I Accidentally Outsourced My Own Curiosity

A short story about effort, ego, and getting lapped by software

This is not the blog post I meant to write.

Well—to the casual fan, the data geek, or the TV savant, it might look exactly like the post I planned (especially if you check out the bonus content).

Let’s rewind a couple nights…

I’m mid–pork chop watching season 2 of Landman.

Recently, I’ve been deep in the Taylor Sheridan–verse, ironically having watched almost everything except Yellowstone.

And somewhere between bites, it hits me: why do I recognize this guy?

Not in a “that guy from that thing” way—but in a very specific way. Like how HBO once made it impossible to see Wendell Pierce without thinking of Baltimore Police and Mardi Gras.

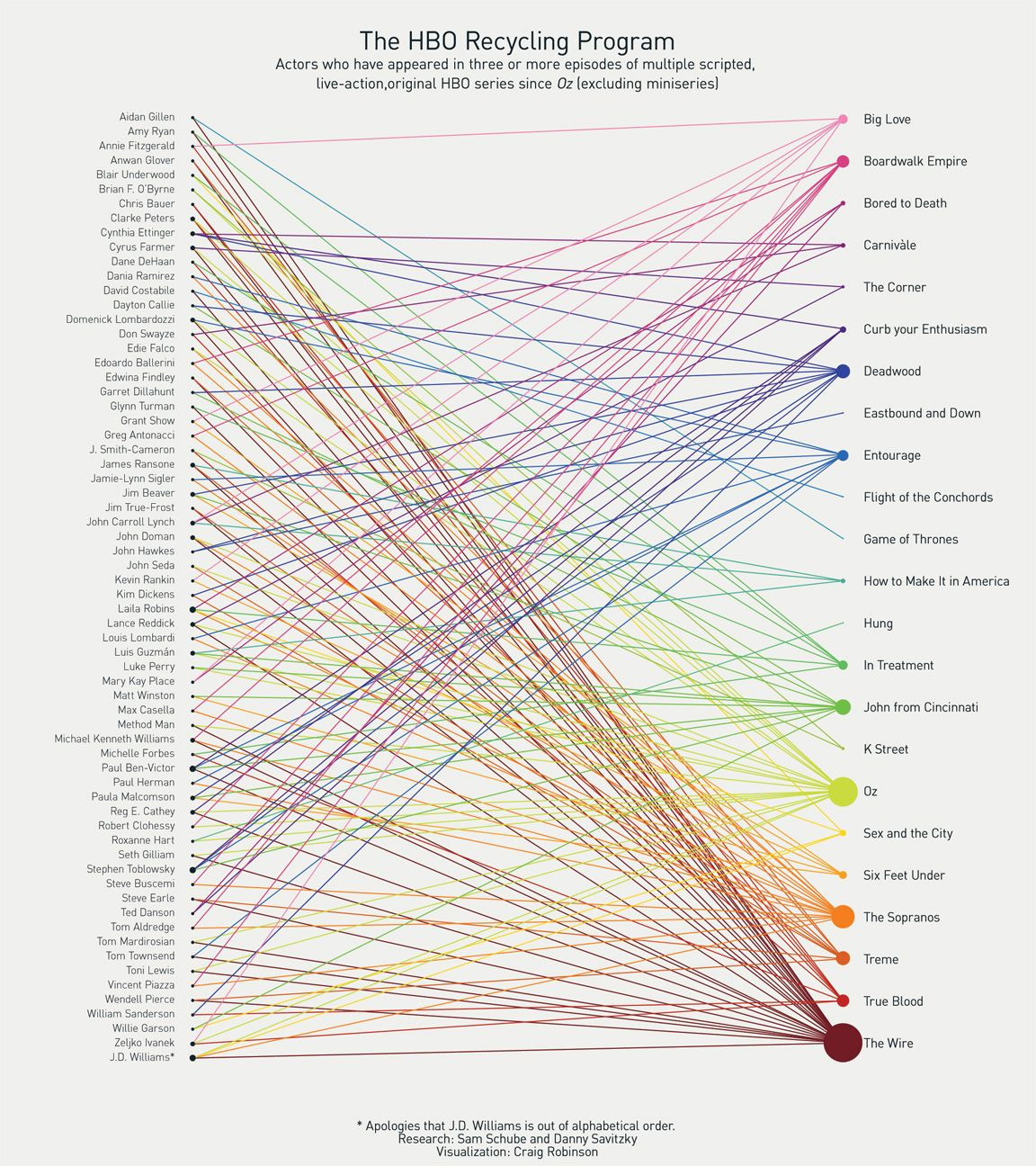

Which immediately unlocked one of my favorite internet artifacts of all time: Andy Greenwald’s Grantland piece, The HBO Recycling Program, which looks at actors who recur across HBO shows. A perfect marriage of culture, data, and a visual that permanently rewired how I watched television.

That’s when it hit me:

I should build this for the Taylor Sheridan-verse.

You can probably guess what happened next.

I Did the Thing I Always Do

I pumped the idea into ChatGPT.

My prompt:

This is one of my favorite articles ever. I want to rebuild it but with a different use case:

Actors across Taylor Sheridan TV shows and Movies.

I want to use AI to assemble the dataset, validate the dataset, write a comparable write up and most importantly code an interactive graphic of the image in this that links actors to shows in a visual fashion.

And, to its credit, ChatGPT did what it does best: it thought about it very carefully and gave me instructions that would’ve taken me a couple nights to execute.

It was thoughtful. Measured. Responsible.

Then I had a slightly dangerous question...

How would other models handle this?

Claude has been having a moment lately. People talk about Claude like it’s a personality trait. Claude Code this, Claude Code that.

I’d also recently re-upped my Gemini subscription.[1]

So what would happen if I gave the exact same prompt to Gemini and Claude?

Same article. Same prompt.

So I did.

And that’s where everything went sideways.

What Actually Happened

ChatGPT gave me a plan.

Gemini… just did it.

Claude… also just did it—but in a way that felt like it assumed I owned a local development environment and strong opinions about file structures.

Gemini returned:

A full article

A dataset

A working interactive visualization

All rendered directly in-app, like a magician who refuses to explain the trick

Claude returned:

A multi-file project

A D3-based interactive HTML visualization

A longform article

A validation script

A README

Which meant moments after beginning this quest, the entire project I’d planned to spend the week on… was finished for me.

They Weirdly All Read the Article the Same Way

Here’s what I found most interesting.

All three models independently understood the Grantland piece in the same way:

Cold open anchored to recognition

Clear inclusion rules to avoid IMDb soup

A small set of “poster children” actors

A single visual that does most of the narrative work

They even wrote nearly identical hooks[2]:

Gemini: “From the moment James Jordan appeared on screen in Special Ops: Lioness…”

Claude: “From the moment James Jordan made his debut on Yellowstone…”

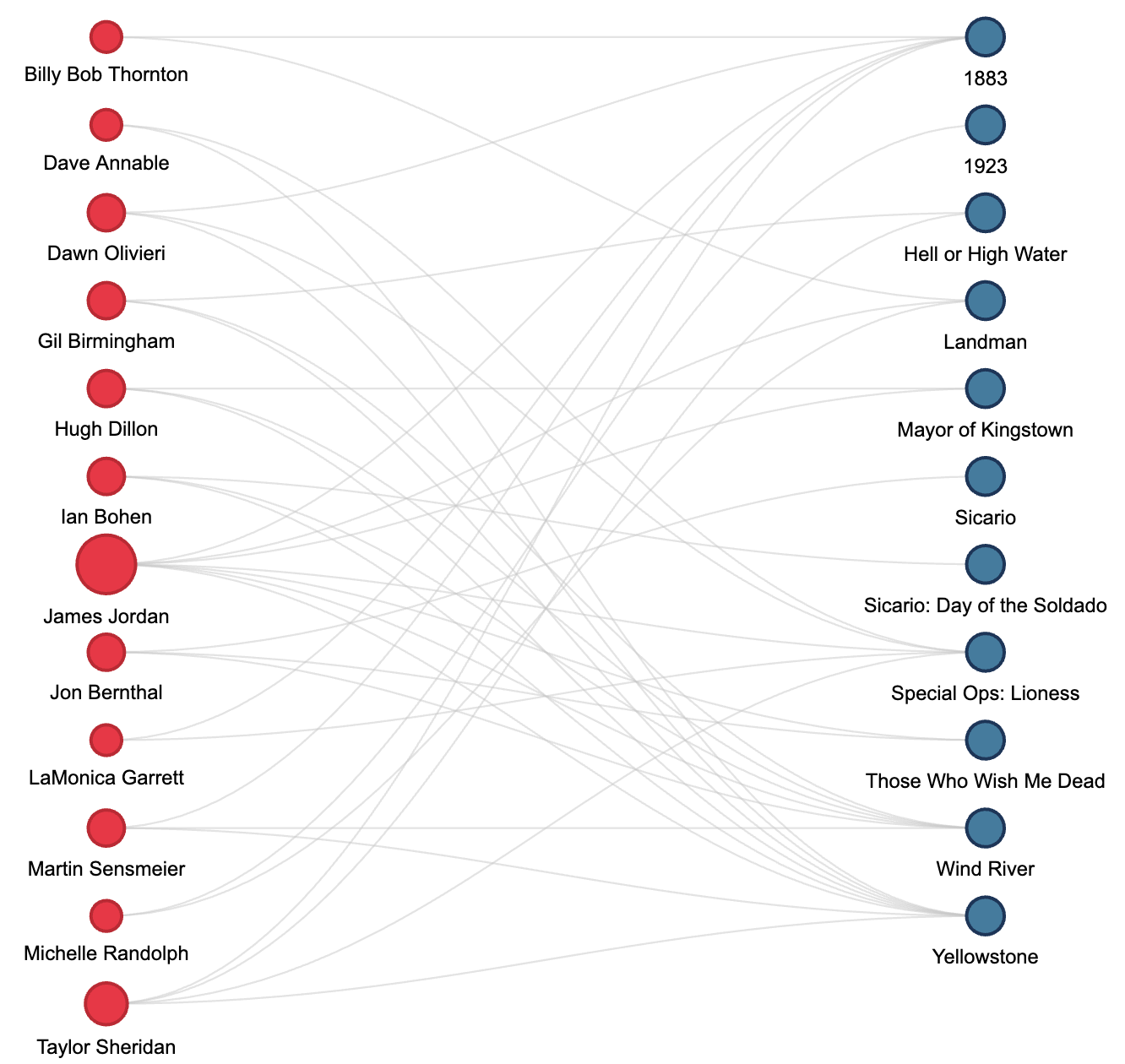

Both models defaulted to a network graph—nodes floating freely, lines crisscrossing in every direction. Technically incorrect. Visually interesting.

But not what I wanted.

The Grantland magic was the rigidity[3]:

Actors on the left

Shows on the right

Clean, legible connections

So I told both Gemini and Claude, in a single follow-up prompt, to fix the visualization to match that exact layout.

They both did. Immediately.

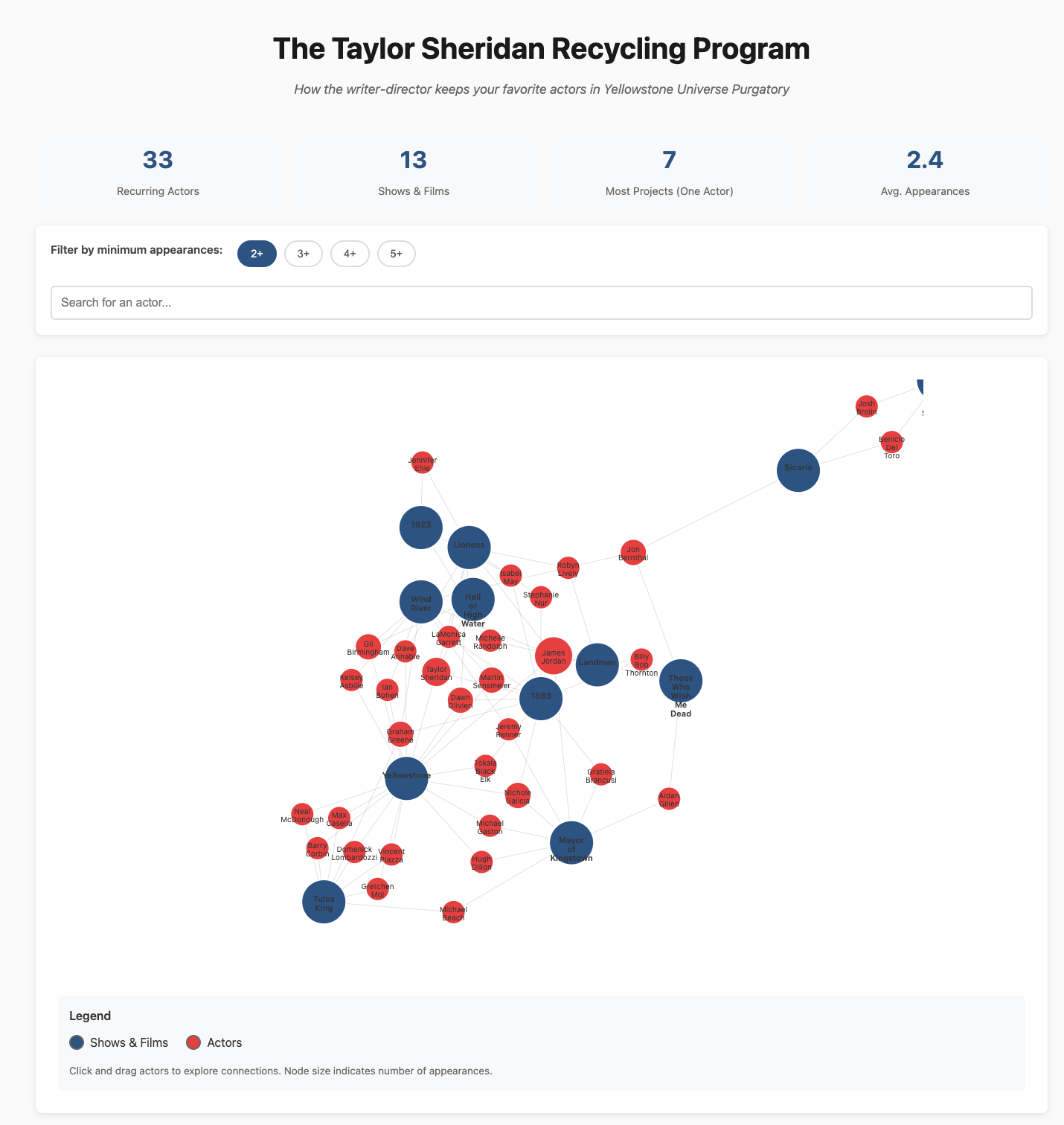

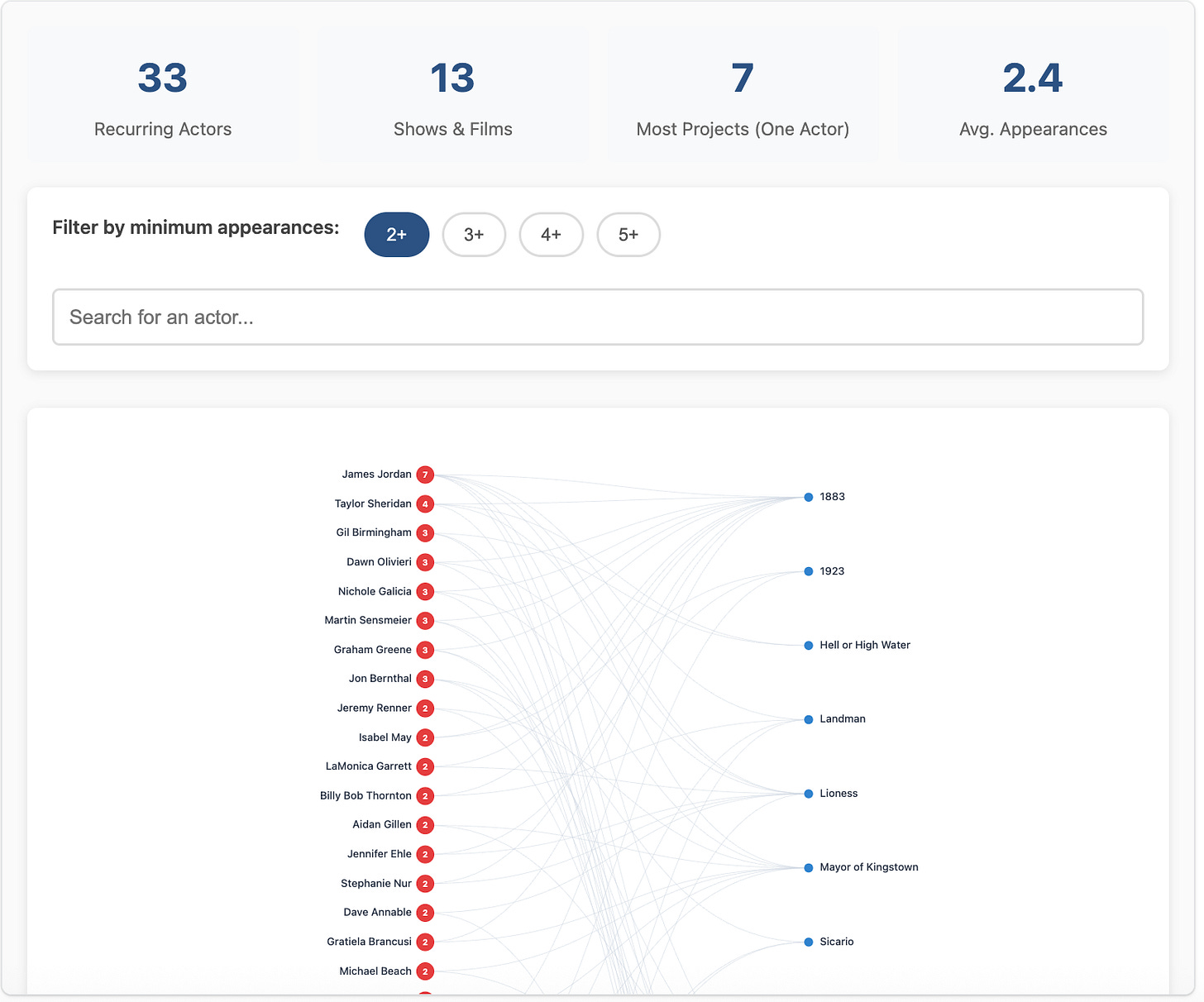
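For the curious, that rigid layout is simple enough to sketch by hand. What follows is my own minimal Python illustration, not the code either model produced (Claude's version was D3). The actor and show names are placeholders, and the function names and the "at least two shows" inclusion rule are assumptions made up for this example.

```python
from collections import defaultdict

# Hypothetical placeholder credits: (actor, show) pairs, not real data.
CREDITS = [
    ("Actor A", "Show X"),
    ("Actor A", "Show Y"),
    ("Actor A", "Show Z"),
    ("Actor B", "Show X"),
    ("Actor B", "Show Z"),
    ("Actor C", "Show Y"),
]


def recurring_actors(credits, min_shows=2):
    """Map each actor to their sorted shows, keeping only repeat players."""
    shows_by_actor = defaultdict(set)
    for actor, show in credits:
        shows_by_actor[actor].add(show)
    # Assumed inclusion rule: drop anyone with fewer than min_shows credits.
    return {
        actor: sorted(shows)
        for actor, shows in shows_by_actor.items()
        if len(shows) >= min_shows
    }


def two_column_layout(actor_shows, left_x=0.0, right_x=1.0):
    """Put actors in a left column, shows in a right column, and list the links."""
    # Most-recurring actors first, ties broken alphabetically.
    actors = sorted(actor_shows, key=lambda a: (-len(actor_shows[a]), a))
    shows = sorted({s for ss in actor_shows.values() for s in ss})

    def column(names, x):
        # Spread names evenly from y=0 (top) to y=1 (bottom).
        step = 1.0 / max(len(names) - 1, 1)
        return {name: (x, i * step) for i, name in enumerate(names)}

    actor_pos = column(actors, left_x)
    show_pos = column(shows, right_x)
    # Each link is a straight segment from an actor's point to a show's point.
    links = [(actor_pos[a], show_pos[s]) for a in actors for s in actor_shows[a]]
    return actor_pos, show_pos, links


if __name__ == "__main__":
    kept = recurring_actors(CREDITS)
    actor_pos, show_pos, links = two_column_layout(kept)
    for start, end in links:
        print(start, "->", end)
```

Fixing the x-coordinate per column is the whole trick: a network graph lets every node float, while this pins actors to one side and shows to the other so the only thing left to read is the lines.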

The Supporting Assets (If You Want to Go Deeper)

For the full experience (the interactive graphics, the articles, the slightly different conclusions), check them out here.

If you’re curious, I prefer Gemini’s graphic because it’s closer to my vision. However, Claude did write a better article and added more to the graphic than I asked for. Nerd.

Final Thoughts

The good news: you all get this post much sooner than expected.

The bad news: I didn’t really get to build or analyze anything.[4]

I mostly guided the invisible AI hand while quietly shattering the trust in one of my most important relationships, ChatGPT, who got very obviously outmaneuvered by her competitors.

We’ll talk…

But anyways, if you’re watching a Taylor Sheridan show wondering “why do I recognize this guy?” chances are it’s James Jordan.

He leads the way with 7 appearances across Taylor Sheridan projects and was the person I recognized in Landman.

— Charlie, Managing Editor of Grantland Nostalgia

PS — Seriously check out the bonus content featuring the copycat articles and data visualizations.

[1] Not even really for Gemini itself, but for the constellation of Google features stapled to it.

[2] At this point, I gave up on ChatGPT for this project because it did so much less on the first pass than the other two models.

[3] Although I can’t help but think of this chart more as “Middle School Dance”… boys on the left, girls on the right…

[4] Not totally true. I technically built the companion app, but it was about 20 minutes of effort.
